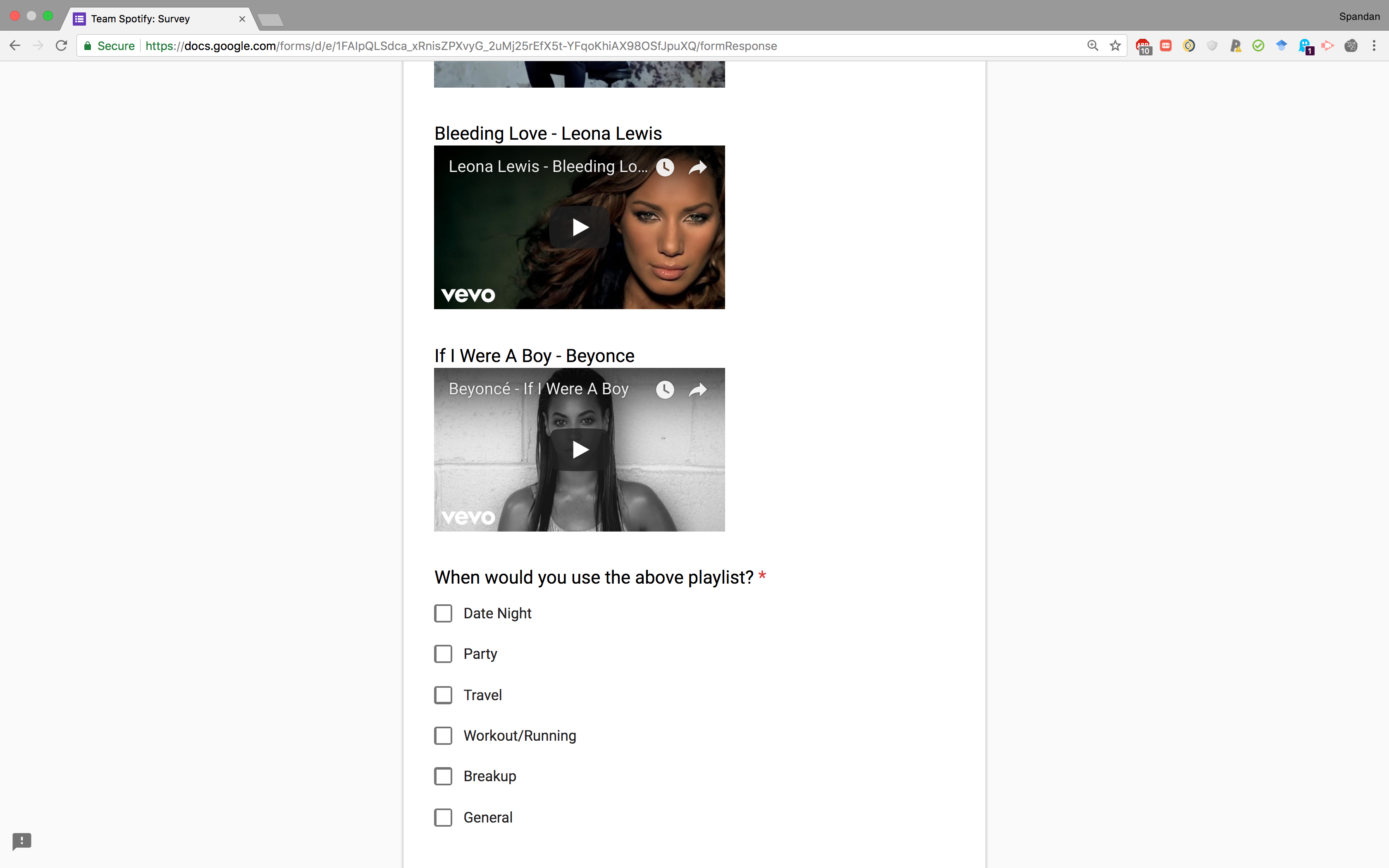

UI for collecting feedback

In order to obtain user feedback on our results, and as a means to obtain results for evaluation which respect the notion that there are multiple possible playlist continuations which all would be equally good to a user, we made a UI to collect information from users. Furthermore, this system also provides us with a way to see how utility driven playlists can be generated by tuning the weights of different features in our prediction model.

For instance, it is reasonable to assume that for a playlist which is about "break-ups", it would make more sense to suggest songs with similar lyrics. However, for a playlist for "working-out", sound features would be better. However, there are nuances of correlations between these features missed out in this assumption. By generating experiments that weigh one feature more than another, and seeing people's response to it, we can identify the optimal importance weights for the feature, given a particular target utility or mood. More details here.

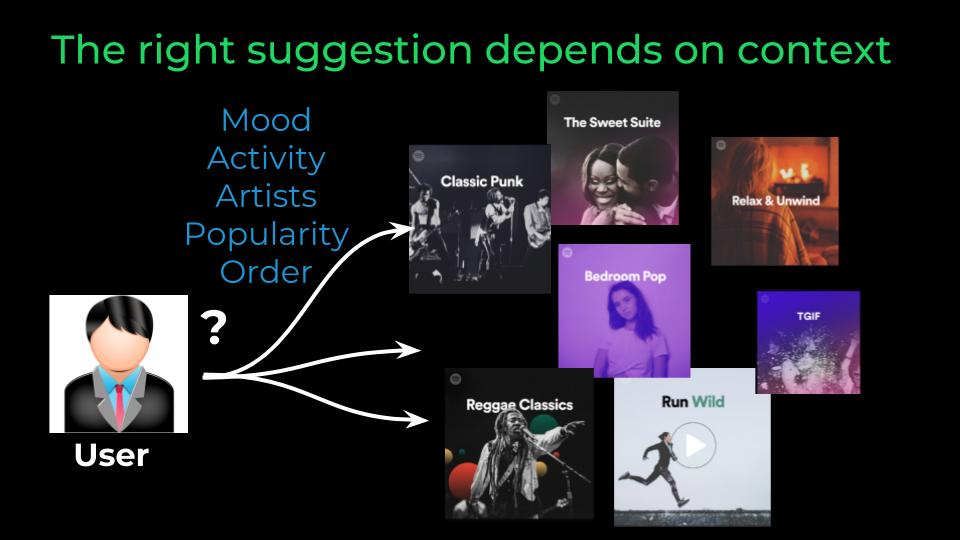

Given a user's playlist, we suggest songs to extend that playlist.

Given a user's playlist, we suggest songs to extend that playlist.